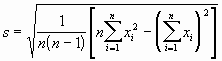

Standard Deviation Formula

by Chandan

(BBSR)

What is the formula for standard deviation?

Answer

The illustration shows the equation for standard deviation. The standard deviation is a way to measure how much a set of data varies from its mean or average.
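As a rough sketch of how the calculation works, the Python below computes the standard deviation directly from its definition. The illustration is not reproduced in this text, so it is unclear whether it shows the population form (divide by n) or the sample form (divide by n - 1); both are shown here. The function names and the `data` values are made up for illustration.

```python
import math
import statistics

def population_sd(values):
    """Square root of the average squared distance from the mean (divide by n)."""
    mean = sum(values) / len(values)
    return math.sqrt(sum((x - mean) ** 2 for x in values) / len(values))

def sample_sd(values):
    """Same idea, but divide by n - 1 when the data is a sample of a larger population."""
    mean = sum(values) / len(values)
    return math.sqrt(sum((x - mean) ** 2 for x in values) / (len(values) - 1))

# Hypothetical data set, chosen only so the arithmetic is easy to follow.
data = [2, 4, 4, 4, 5, 5, 7, 9]

print(population_sd(data))      # mean is 5, squared deviations sum to 32, sqrt(32 / 8) = 2.0
print(sample_sd(data))          # sqrt(32 / 7), a little larger than 2
print(statistics.pstdev(data))  # standard library equivalent of population_sd
print(statistics.stdev(data))   # standard library equivalent of sample_sd
```

In everyday use the standard library functions `statistics.pstdev` and `statistics.stdev` are usually enough; the hand-written versions are only there to show the steps.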

Standard Deviation

by Dev Dutt Sharma

(Faridabad)

What is the definition of standard deviation?


What Is Standard Deviation

by Vijay

(Mumbai)

Please explain what standard deviation really is.

Answer:

Dee Reavis

Standard deviation is simply a measure of the variability of your data. As you know, the mean or average is a measure of the central tendency of your data. The standard deviation tells you how far the data points are likely to fall from the mean of your data set.

For example, we might have two data sets that we assume to be normally distributed. Both have a mean of 10, but one has an SD of 1 and the other has an SD of 2. If we were to graph the data point occurrences of the two data sets, the curve for the first would be narrower than the curve for the second, because with the smaller SD the data points are more likely to occur close to the mean.
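The comparison can be checked numerically. This is a minimal sketch using `statistics.NormalDist` from the Python standard library, assuming both data sets really are normal; the helper `prob_within` and the variable names are made up for illustration.

```python
from statistics import NormalDist

# Two normal distributions with the same mean but different spreads.
narrow = NormalDist(mu=10, sigma=1)
wide = NormalDist(mu=10, sigma=2)

def prob_within(dist, distance):
    """Probability that a value falls within `distance` of the distribution's mean."""
    return dist.cdf(dist.mean + distance) - dist.cdf(dist.mean - distance)

p_narrow = prob_within(narrow, 1)  # chance of landing between 9 and 11 when SD = 1
p_wide = prob_within(wide, 1)      # chance of landing between 9 and 11 when SD = 2

print(f"SD = 1: {p_narrow:.1%} of values fall between 9 and 11")
print(f"SD = 2: {p_wide:.1%} of values fall between 9 and 11")
```

With an SD of 1, roughly 68% of values land between 9 and 11; with an SD of 2, only about 38% do, which is why the second curve looks wider and flatter.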

Standard Deviation Math

by Marleah

(Mississippi)

If my mean is 25, the oldest age is 49 and the standard deviation is 5.9, how do you tell how many standard deviations 49 is away from the mean?

Answer:

The data point is x standard deviations away, where x = (data point - mean) / standard deviation

Here is the math:

(49 - 25) / 5.9 = 24 / 5.9 ≈ 4.07 standard deviations from the mean.
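The same calculation as a small Python sketch. The function name `z_score` is just a label chosen here for "number of standard deviations from the mean"; the numbers come from the question.

```python
def z_score(data_point, mean, standard_deviation):
    """How many standard deviations a data point lies from the mean."""
    return (data_point - mean) / standard_deviation

z = z_score(49, 25, 5.9)
print(round(z, 2))  # 4.07
```

A negative result would mean the data point is below the mean.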

Standard Deviations

by Viren Sahi

(Panipat, Haryana)

What is the business use of standard deviations?

Answer

Standard deviations help you to understand your data. Their business use can best be understood by an example.

Suppose that you have a production line making widgets. After recording and analyzing the data from your production line, you determine that your average number of defects for a production day of 10,000 units is 5 (from, say, a year's worth of data). You also calculate the standard deviation and determine that it is 1. You would probably assume that your distribution is normal. If you get 8 or more defects in a day, you will begin to suspect that something has changed in your production line, because 8 is three standard deviations above the mean and your probability of getting 8 or more defects in a day is less than 1%. See Normal Probability Distributions.
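The "less than 1%" figure can be checked with a short Python sketch. It assumes, as the answer does, that daily defects follow a normal distribution with mean 5 and SD 1; real defect counts are whole numbers, so this is only an approximation. The variable names are made up for illustration.

```python
from statistics import NormalDist

# Assumed model from the example: daily defects ~ Normal(mean 5, SD 1).
defects = NormalDist(mu=5, sigma=1)

# Probability of seeing 8 or more defects in a single day (upper tail).
p_8_or_more = 1 - defects.cdf(8)

print(f"P(8 or more defects) = {p_8_or_more:.2%}")
print("Below 1%:", p_8_or_more < 0.01)
```

Under this assumption the chance is roughly 0.13%, so a day with 8 or more defects would be a reasonable signal to investigate the line.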